ArtEmis: Affective Language for Visual Art

- Stanford University

- LIX, Ecole Polytechnique

- King Abdullah University of Science and Technology (KAUST)

Abstract

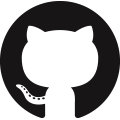

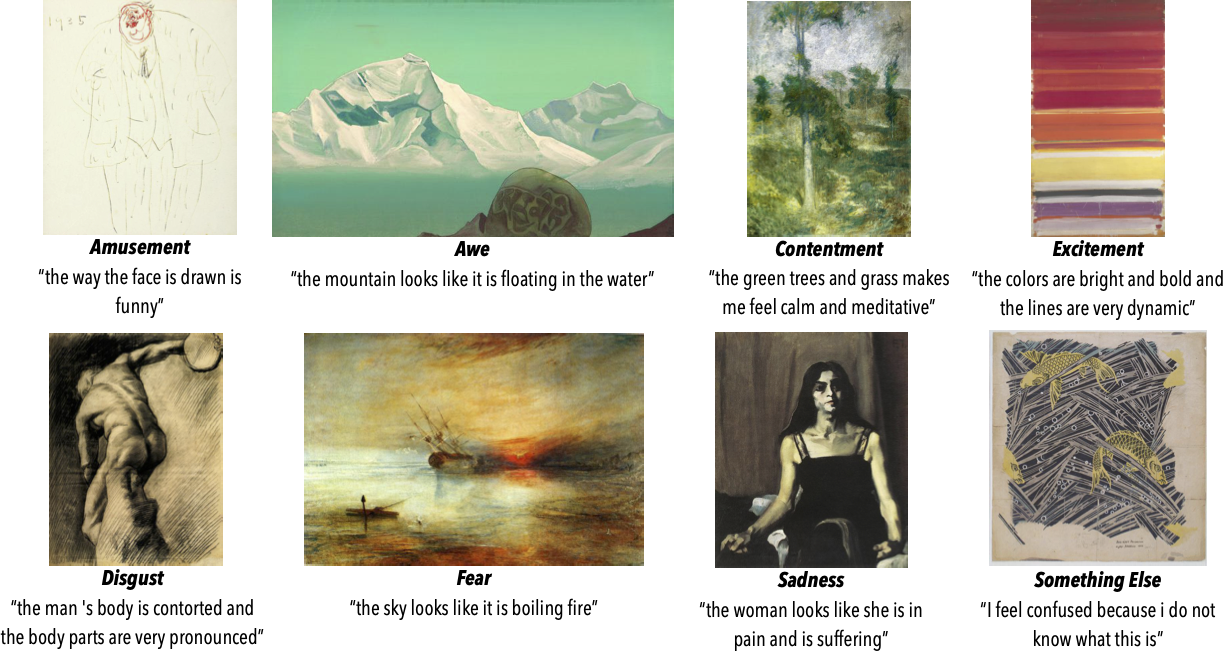

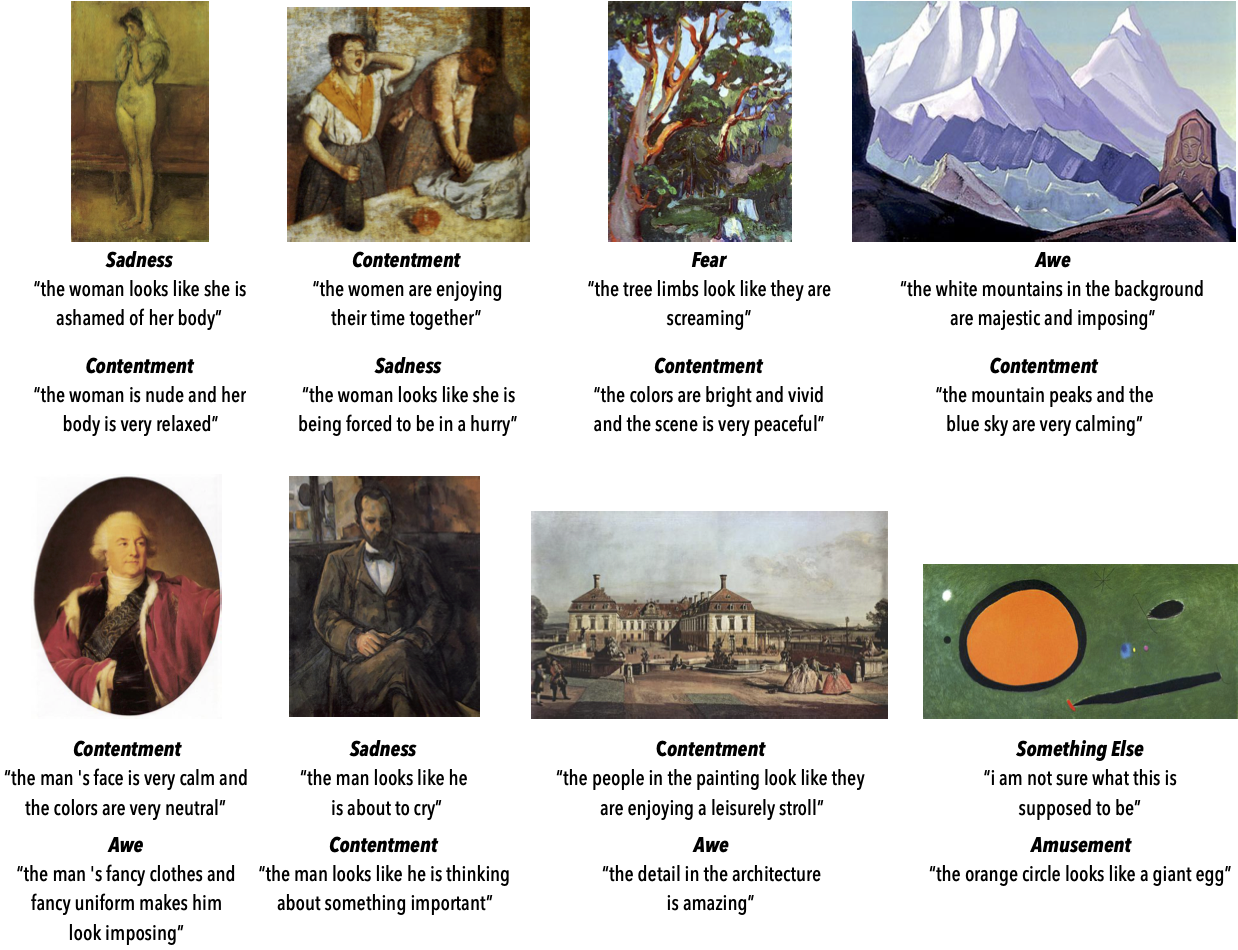

We present a novel large-scale dataset and accompanying machine learning models aimed at providing a detailed understanding of the interplay between visual content, its emotional effect, and explanations for the latter in language. In contrast to most existing annotation datasets in computer vision, we focus on the affective experience triggered by visual artworks and ask the annotators to indicate the dominant emotion they feel for a given image and, crucially, to also provide a grounded verbal explanation for their emotion choice. As we demonstrate below, this leads to a rich set of signals for both the objective content and the affective impact of an image, creating associations with abstract concepts (e.g., “freedom” or “love”), or references that go beyond what is directly visible, including visual similes and metaphors, or subjective references to personal experiences. We focus on visual art (e.g., paintings, artistic photographs) as it is a prime example of imagery created to elicit emotional responses from its viewers. Our dataset, termed ArtEmis, contains 455K emotion attributions and explanations from humans, on 80K artworks from WikiArt. Building on this data, we train and demonstrate a series of captioning systems capable of expressing and explaining emotions from visual stimuli. Remarkably, the captions produced by these systems often succeed in reflecting the semantic and abstract content of the image, going well beyond systems trained on existing datasets.

Qualitative Results

Videos

ArtEmis in 8 minutes!

License

The ArtEmis dataset is released under the ArtEmis Terms of Use, and our code is released under the MIT license.

Dataset

- To download the ArtEmis dataset (~455K annotations), please fill out this form and accept the Terms of Use.
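Each annotation pairs an artwork with a dominant emotion and a verbal explanation. As a minimal sketch of how such records might be tallied per artwork, the snippet below uses a handful of made-up records. The field names (`painting`, `emotion`, `utterance`), the artwork identifiers, and the emotion labels are all illustrative assumptions and may not match the released files.

```python
from collections import Counter, defaultdict

# Toy records for illustration only. Field names, artwork ids, and
# emotion labels are hypothetical, not the dataset's actual schema.
annotations = [
    {"painting": "artwork_a", "emotion": "awe",
     "utterance": "The open sky makes me think of freedom."},
    {"painting": "artwork_a", "emotion": "awe",
     "utterance": "The scale of the mountains is overwhelming."},
    {"painting": "artwork_a", "emotion": "contentment",
     "utterance": "It reminds me of quiet summer evenings."},
    {"painting": "artwork_b", "emotion": "sadness",
     "utterance": "The empty chair feels like someone is missing."},
]


def emotion_histograms(records):
    """Count how often each emotion was chosen for each artwork."""
    histograms = defaultdict(Counter)
    for record in records:
        histograms[record["painting"]][record["emotion"]] += 1
    return histograms


def dominant_emotion(histogram):
    """Return the most frequently chosen emotion in one artwork's histogram."""
    return histogram.most_common(1)[0][0]


if __name__ == "__main__":
    for painting, histogram in emotion_histograms(annotations).items():
        print(painting, dominant_emotion(histogram), dict(histogram))
```

Keeping the full histogram rather than only the top label preserves the disagreement among annotators, which matters for a subjective signal such as emotion.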

Browse

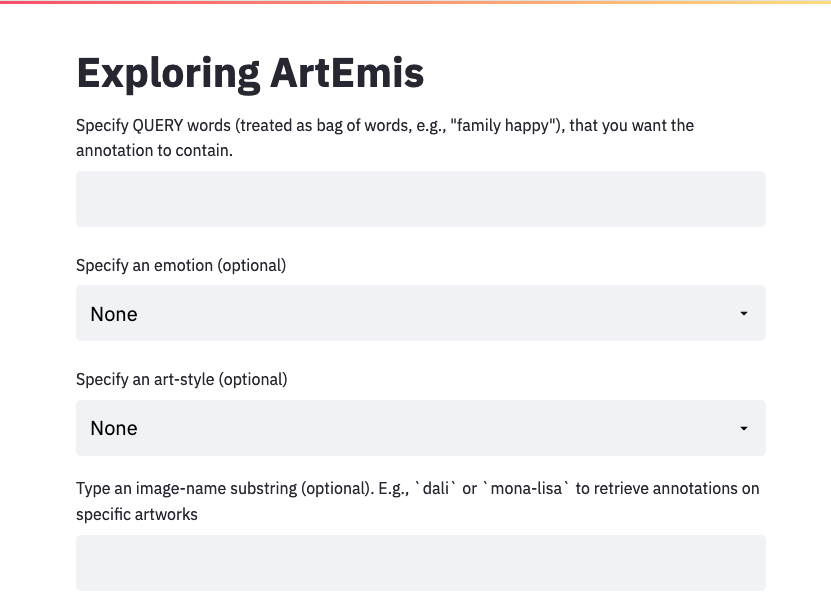

You can browse the ArtEmis annotations here.

Citation

If you find our work useful in your research, please consider citing:

@article{achlioptas2021artemis,
  title={ArtEmis: Affective Language for Visual Art},
  author={Achlioptas, Panos and Ovsjanikov, Maks and Haydarov, Kilichbek and Elhoseiny, Mohamed and Guibas, Leonidas},
  journal={CoRR},
  volume={abs/2101.07396},
  year={2021}
}

Contact

To contact the authors, please use artemis.dataset@gmail.com

Acknowledgements

This work is funded by a Vannevar Bush Faculty Fellowship, KAUST grants BAS/1/1685-01-01 and CRG-2017-3426, the ERC Starting Grant No. 758800 (EXPROTEA), the ANR AI Chair AIGRETTE, and gifts from the Adobe, Amazon AWS, Autodesk, and Snap corporations. The authors wish to thank Fei Xia and Jan Dombrowski for their help with the AMT instruction design and Nikos Gkanatsios for several fruitful discussions. The authors also want to emphasize their gratitude to all the hard-working Amazon Mechanical Turkers, without whom this work would not be possible.